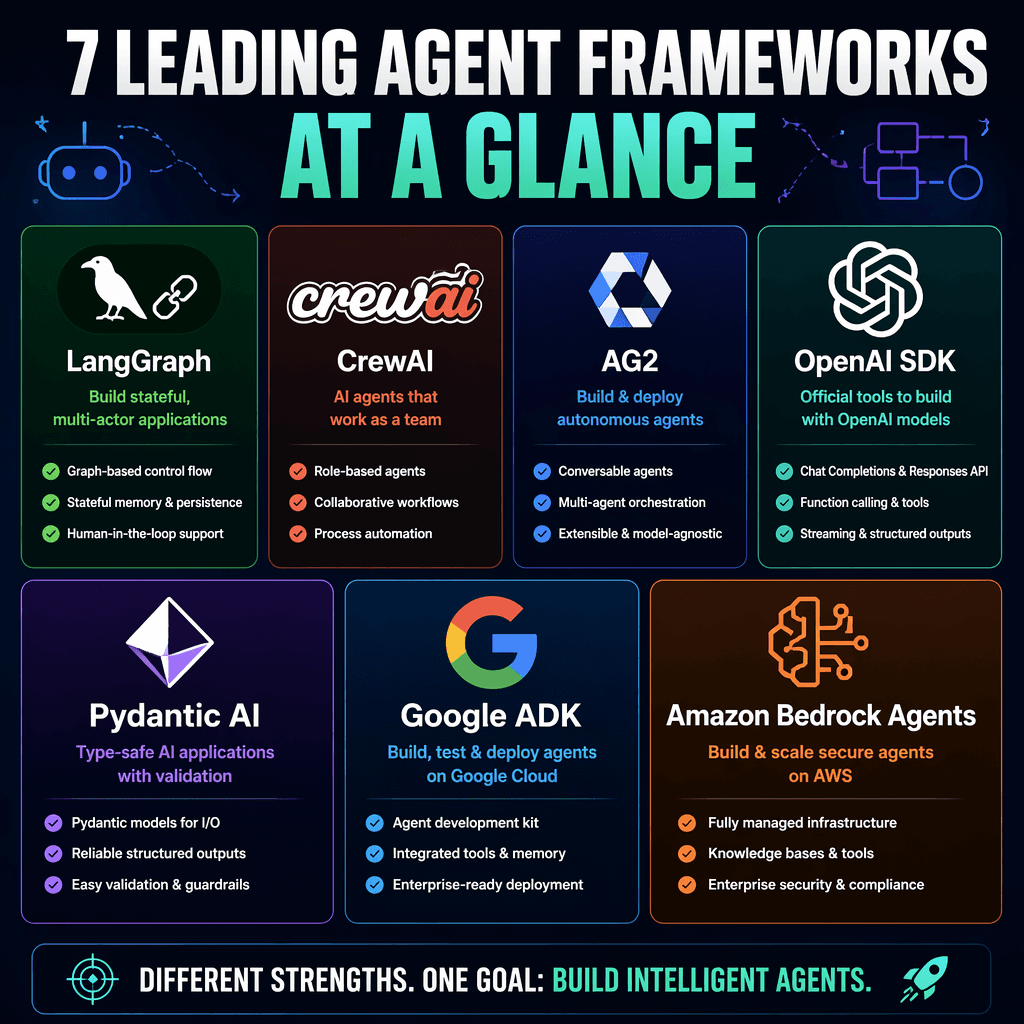

The AI agent ecosystem has rapidly evolved, with frameworks like LangGraph, CrewAI, AG2, OpenAI Agents SDK, Pydantic AI, Google ADK, and Amazon Bedrock Agents leading the charge. Each framework offers distinct advantages, but choosing the right one depends on your specific engineering needs, team expertise, and deployment goals.

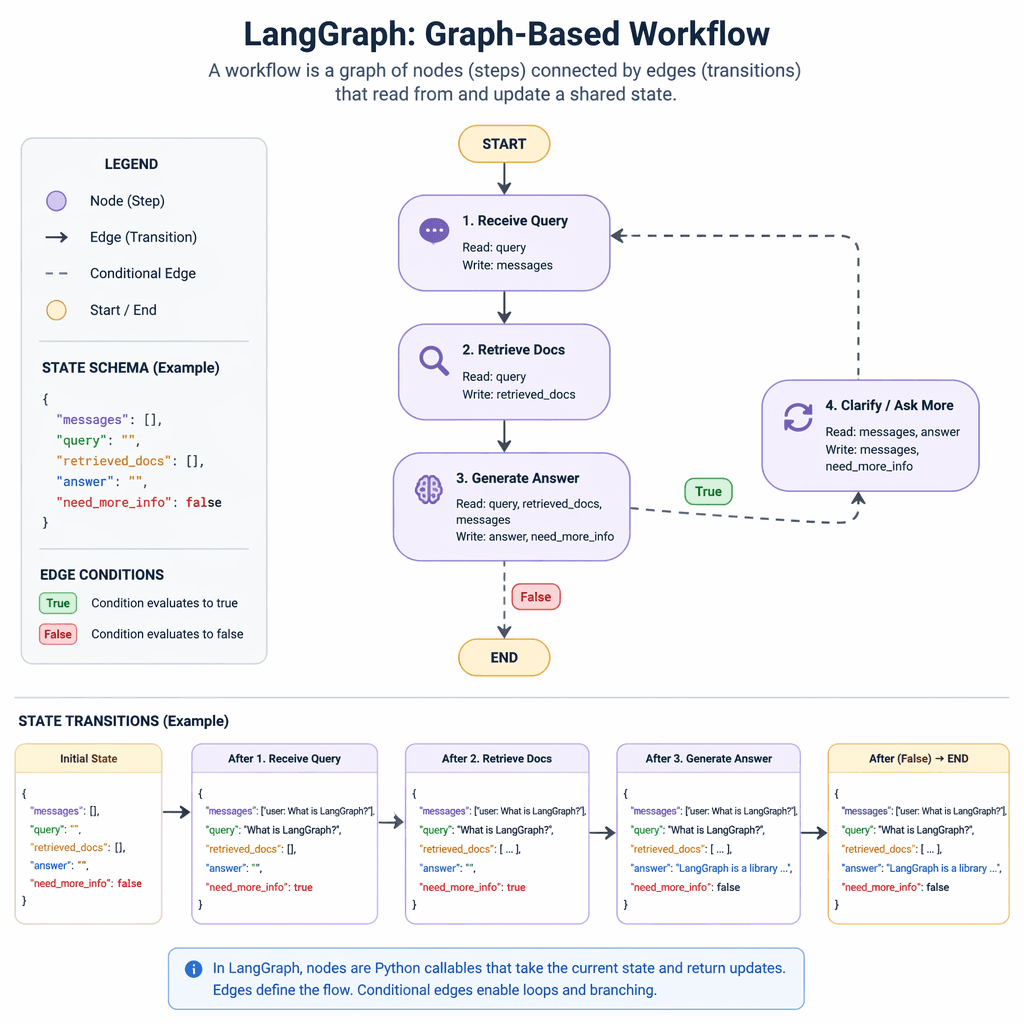

LangGraph: Precision for Production Workflows

LangGraph is a graph-based framework designed for production-grade workflows. It allows developers to define agent workflows as directed graphs, enabling explicit control over state transitions, branching, and human-in-the-loop processes. While its verbosity and steep learning curve may deter casual users, LangGraph excels in durability and observability, particularly when paired with LangSmith for debugging and tracing.
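
To make the graph idea concrete, here is a minimal, framework-agnostic sketch in plain Python. It is not LangGraph's API: the names `run_graph`, `classify`, `auto_reply`, and `escalate` are hypothetical, and the refund rule is an assumption chosen only to show an explicit branch and a human-in-the-loop stop.

```python
from typing import Callable, Dict, List, Optional

State = Dict[str, object]
Node = Callable[[State], State]
Router = Callable[[State], Optional[str]]


def classify(state: State) -> State:
    # Hypothetical node: treat refund requests as high risk.
    text = str(state["request"]).lower()
    return {**state, "risk": "high" if "refund" in text else "low"}


def auto_reply(state: State) -> State:
    return {**state, "result": "answered automatically"}


def escalate(state: State) -> State:
    # Stand-in for a human-in-the-loop pause.
    return {**state, "result": "waiting for human approval"}


NODES: Dict[str, Node] = {
    "classify": classify,
    "auto_reply": auto_reply,
    "escalate": escalate,
}

# Every transition is explicit: a router returns the next node name, or None to stop.
EDGES: Dict[str, Router] = {
    "classify": lambda s: "escalate" if s["risk"] == "high" else "auto_reply",
    "auto_reply": lambda s: None,
    "escalate": lambda s: None,
}


def run_graph(start: str, state: State) -> State:
    """Walk the graph from `start`, recording the visited path for later inspection."""
    path: List[str] = []
    current: Optional[str] = start
    while current is not None:
        state = NODES[current](state)
        path.append(current)
        current = EDGES[current](state)
    return {**state, "path": path}
```

Calling `run_graph("classify", {"request": "Please refund my order"})` takes the `escalate` branch, and the recorded `path` is the kind of trace that makes a graph-based workflow easier to debug.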

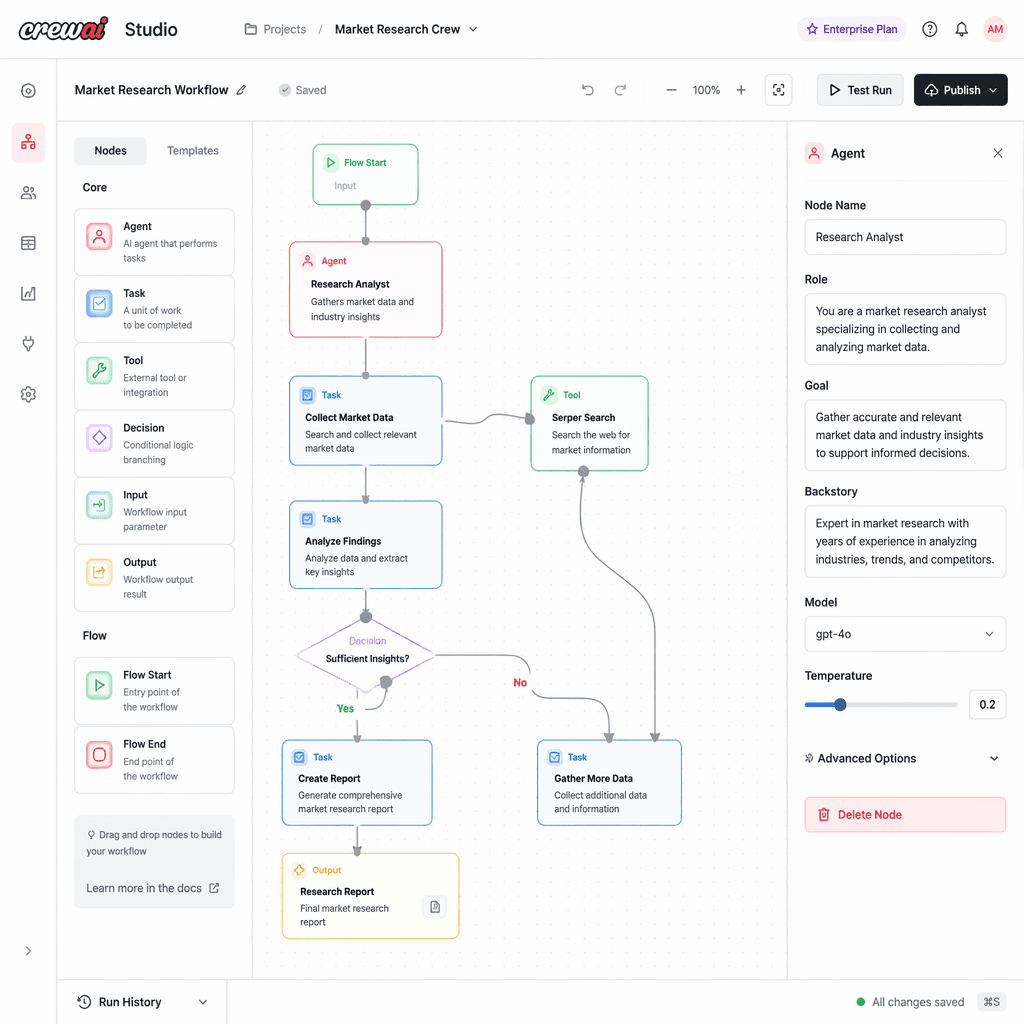

CrewAI: Rapid Prototyping and Deployment

CrewAI simplifies multi-agent development with its 'crew' abstraction, where agents are assigned roles and tasks. Its visual editor, CrewAI Studio, empowers non-technical stakeholders to design workflows. While ideal for rapid prototyping, its high-level abstractions can limit control and transparency in complex systems. Enterprise features like RBAC and PII masking enhance its appeal for production use.

AG2: Conversable Agents for Experimentation

AG2, formerly AutoGen, focuses on structured conversations between agents. Its recent redesign introduces streaming architectures, dependency injection, and typed tools, making it suitable for research and experimentation. However, its production readiness remains lower compared to other frameworks, and self-managed observability can be a challenge.
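
A structured conversation between agents can be pictured as a turn-taking loop with a stop condition. The sketch below is plain Python, not AG2's API: `ToyAgent`, `converse`, and the `DONE` stop word are hypothetical names, and the reply functions are hard-coded stand-ins for model calls.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ToyAgent:
    name: str
    reply: Callable[[str], str]  # stand-in for a model call


def converse(
    initiator: ToyAgent,
    responder: ToyAgent,
    opening: str,
    max_turns: int = 6,
    stop_word: str = "DONE",
) -> List[Tuple[str, str]]:
    """Alternate replies until one contains `stop_word` or `max_turns` is reached."""
    transcript = [(initiator.name, opening)]
    speaker, message = responder, opening
    for _ in range(max_turns):
        message = speaker.reply(message)
        transcript.append((speaker.name, message))
        if stop_word in message:
            break
        # Hand the turn to the other agent.
        speaker = initiator if speaker is responder else responder
    return transcript


writer = ToyAgent(
    "writer",
    lambda msg: "draft v2 with tests" if "add tests" in msg else "draft v1",
)
reviewer = ToyAgent(
    "reviewer",
    lambda msg: "DONE, approved" if "tests" in msg else "please add tests",
)

transcript = converse(reviewer, writer, "write a function")
```

The `max_turns` cap matters in practice: without it, two agents that never agree would loop indefinitely.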

OpenAI Agents SDK: Ecosystem Integration

OpenAI Agents SDK is tailored for developers working within the OpenAI ecosystem. It offers features like session persistence, retry policies, and an any-LLM adapter. While its ease of use and integration with OpenAI models make it attractive, its partial model agnosticism may limit flexibility for teams using diverse providers.
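
A retry policy can be illustrated without any SDK. The following sketch is generic Python, not the OpenAI Agents SDK's interface: `call_with_retry` and its parameters are hypothetical, and retrying only on `TimeoutError` with exponential backoff is an assumption for the example.

```python
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_retry(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError,),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `fn`, retrying on the listed exceptions with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the original error
            # Delay doubles each time: base_delay, 2 * base_delay, 4 * base_delay, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("unreachable")
```

Passing `sleep` as a parameter keeps the policy testable: a test can substitute a recorder for `time.sleep` and check the delays without waiting.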

Pydantic AI: Type-Safe Agent Development

Pydantic AI emphasizes type safety and composability with its Capabilities and AgentSpec features. It supports over 25 providers and offers adaptive tools for tasks like web search and image generation. While its structured approach suits production environments, its moderate ease of use may require more upfront investment in learning.
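
The value of type safety is easiest to see when a model returns malformed output. This sketch uses only the standard library rather than Pydantic AI itself: `WeatherReport` and `parse_report` are hypothetical names, and the two-field schema is an assumption for illustration.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class WeatherReport:
    city: str
    temperature_c: float


def parse_report(raw: str) -> WeatherReport:
    """Validate raw model output before any downstream code touches it."""
    data = json.loads(raw)  # raises ValueError (JSONDecodeError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    city = data.get("city")
    temp = data.get("temperature_c")
    if not isinstance(city, str) or not city:
        raise ValueError("city must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(temp, bool) or not isinstance(temp, (int, float)):
        raise ValueError("temperature_c must be a number")
    return WeatherReport(city=city, temperature_c=float(temp))
```

Rejecting bad output at the boundary means the rest of the agent can rely on `WeatherReport` fields having the declared types, which is the property a validation-first framework automates.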

Google ADK: Workflow Delegation at Scale

Google ADK leverages graph-based workflows and a Task API for structured delegation between agents. Its integration with Google Cloud services like BigQuery and Vertex AI makes it ideal for teams already invested in the Google ecosystem. However, its alpha-stage features may require caution for mission-critical deployments.

Amazon Bedrock Agents: Enterprise-Grade AI

Amazon Bedrock Agents focus on enterprise deployments with features like IAM, VPC integration, and HIPAA compliance. While its managed nature simplifies scaling, it may lack the flexibility of open-source frameworks for teams seeking granular control.

Key Takeaways

- LangGraph is ideal for production workflows requiring durability and explicit state control.

- CrewAI excels in rapid prototyping but may limit transparency in complex systems.

- AG2 is best suited for research and experimentation, with lower production readiness.

- OpenAI Agents SDK integrates seamlessly with OpenAI models but is less flexible for multi-provider setups.

- Pydantic AI offers type-safe, composable agent development for structured environments.

- Google ADK supports scalable workflows but requires caution due to alpha-stage features.

- Amazon Bedrock Agents simplify enterprise deployments but may lack customization.

Choosing the right AI agent framework is not just about features, but about aligning with your team's goals and expertise.

Builder note

Consider your team's familiarity with graph-based workflows, type-safe development, or managed services when selecting a framework. Each option has trade-offs in control, scalability, and ease of use.

Source Card

Definitive Guide to Agentic Frameworks in 2026: Langgraph, CrewAI, AG2 ...

This guide provides a comprehensive comparison of seven leading AI agent frameworks, highlighting their features, adoption contexts, and engineering trade-offs.

softmaxdata.com

| Signal | Why it matters |
|---|---|
| LangGraph's graph-based state machines | Offers granular control for production workflows. |
| CrewAI's visual editor | Simplifies multi-agent design for non-technical stakeholders. |
| AG2's streaming architecture | Enhances experimentation with event-driven agents. |
| OpenAI SDK's any-LLM adapter | Improves flexibility within the OpenAI ecosystem. |
| Pydantic AI's Capabilities | Enables reusable, composable agent behaviors. |
| Google ADK's Task API | Supports scalable agent-to-agent delegation. |
| Amazon Bedrock's managed services | Simplifies enterprise-grade AI deployments. |

- Evaluate your team's familiarity with graph-based or sequential workflows.

- Consider the importance of type safety and composability for your agents.

- Assess the need for managed services versus open-source flexibility.

- Factor in integration with existing cloud ecosystems like AWS or Google Cloud.

- Prioritize frameworks with robust observability and debugging tools.

Builder implications

For teams evaluating these frameworks, the useful question is not whether a release sounds important but whether it changes how an agent system is built, tested, operated, or bought. The softmaxdata.com guide cited above gives builders a concrete comparison to inspect. Map its claims against the parts of an agent stack that usually become fragile first: tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify a workflow where your current agent framework already creates measurable pain, such as slow triage, brittle handoffs, unclear ownership, or poor observability.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.
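
The baseline named in the checklist above can be captured with a few lines of code before any framework change. This is a sketch under assumptions: the `Run` record, its field names, and the `baseline` function are hypothetical, and the sample numbers are invented purely to exercise the arithmetic.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Run:
    latency_s: float
    cost_usd: float
    succeeded: bool
    needed_human_fix: bool


def baseline(runs: List[Run]) -> Dict[str, float]:
    """Summarize latency, cost, and human-correction rate for a set of runs."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    successes = sum(r.succeeded for r in runs)
    total_cost = sum(r.cost_usd for r in runs)
    return {
        "mean_latency_s": sum(r.latency_s for r in runs) / n,
        "cost_per_run_usd": total_cost / n,
        # Cost per *successful* task penalizes runs that burn budget and fail.
        "cost_per_success_usd": total_cost / successes if successes else float("inf"),
        "human_correction_rate": sum(r.needed_human_fix for r in runs) / n,
    }


# Invented sample data for illustration only.
sample = [
    Run(2.0, 0.25, True, False),
    Run(4.0, 0.50, True, True),
    Run(6.0, 0.25, False, True),
    Run(4.0, 0.50, True, False),
]
summary = baseline(sample)
```

Recording the same summary before and after a change gives the team a like-for-like comparison instead of an impression.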

| Area | Question | Practical test |
|---|---|---|
| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |
| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |
| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |
| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |
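
The governance test in the table can be prototyped as a small approval gate. This is an illustrative sketch, not a feature of any framework discussed here: `ApprovalGate`, `AuditEntry`, and the action names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set


@dataclass
class AuditEntry:
    action: str
    approved_by: Optional[str]
    executed: bool


@dataclass
class ApprovalGate:
    high_impact: Set[str]
    log: List[AuditEntry] = field(default_factory=list)

    def run(
        self,
        action: str,
        execute: Callable[[], str],
        approver: Optional[str] = None,
    ) -> Optional[str]:
        """Execute `action`, blocking high-impact actions that lack an approver."""
        if action in self.high_impact and approver is None:
            # Blocked: record the attempt so operators can see it was held.
            self.log.append(AuditEntry(action, None, False))
            return None
        result = execute()
        self.log.append(AuditEntry(action, approver, True))
        return result


gate = ApprovalGate(high_impact={"issue_refund"})
blocked = gate.run("issue_refund", lambda: "refunded")
approved = gate.run("issue_refund", lambda: "refunded", approver="alice")
routine = gate.run("send_receipt", lambda: "sent")
```

After these three calls, `gate.log` holds one blocked attempt, one approved execution attributed to `alice`, and one routine action, which is the record an operator needs when reviewing what the agent did.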

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports for these frameworks. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.