AI agent frameworks are the backbone of autonomous systems, enabling engineers to focus on agent logic while abstracting away the complexities of orchestration, tool integration, and state management. In 2026, the landscape of frameworks has matured, offering diverse options tailored to specific use cases and technical preferences. This article dives into the latest engineering releases, key features, and practical considerations for selecting the right framework for your projects.

What Makes AI Agent Frameworks Essential?

An AI agent framework is a code library that provides the essential building blocks for creating autonomous systems. These frameworks handle the orchestration loop (observe, decide, act, reflect), allowing developers to focus on defining agent behavior rather than managing state transitions, tool calls, or error recovery. Unlike no-code solutions, frameworks require programming expertise but offer more control and flexibility, which makes them well suited to production-grade systems.
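To make the loop concrete, here is a minimal sketch of observe, decide, act, reflect in plain Python. It is not any framework's API: `AgentState`, `run_agent`, and `toy_policy` are hypothetical names, and the policy is a stand-in for what would normally be an LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    """Everything the loop carries between steps."""
    goal: str
    history: list[str] = field(default_factory=list)
    done: bool = False


def run_agent(
    state: AgentState,
    decide: Callable[[AgentState], str],
    tools: dict[str, Callable[[], str]],
    max_steps: int = 5,
) -> AgentState:
    """Run observe -> decide -> act -> reflect until done or out of steps."""
    for _ in range(max_steps):
        # Observe and decide: the policy reads the goal and history, then picks an action.
        action = decide(state)
        if action == "finish":
            state.done = True
            break
        # Act: call the chosen tool; failures become observations instead of crashes.
        try:
            result = tools[action]()
        except Exception as exc:
            result = f"error: {exc}"
        # Reflect: record the outcome so the next decision can use it.
        state.history.append(f"{action} -> {result}")
    return state


def toy_policy(state: AgentState) -> str:
    """Hypothetical stand-in for an LLM call: search once, then finish."""
    return "finish" if state.history else "search"


final = run_agent(AgentState(goal="find docs"), toy_policy, {"search": lambda: "3 results"})
print(final.history, final.done)
```

A framework earns its place by owning everything around this loop (retries, persistence, tracing) so that application code only supplies the policy and the tools.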

Key Engineering Considerations

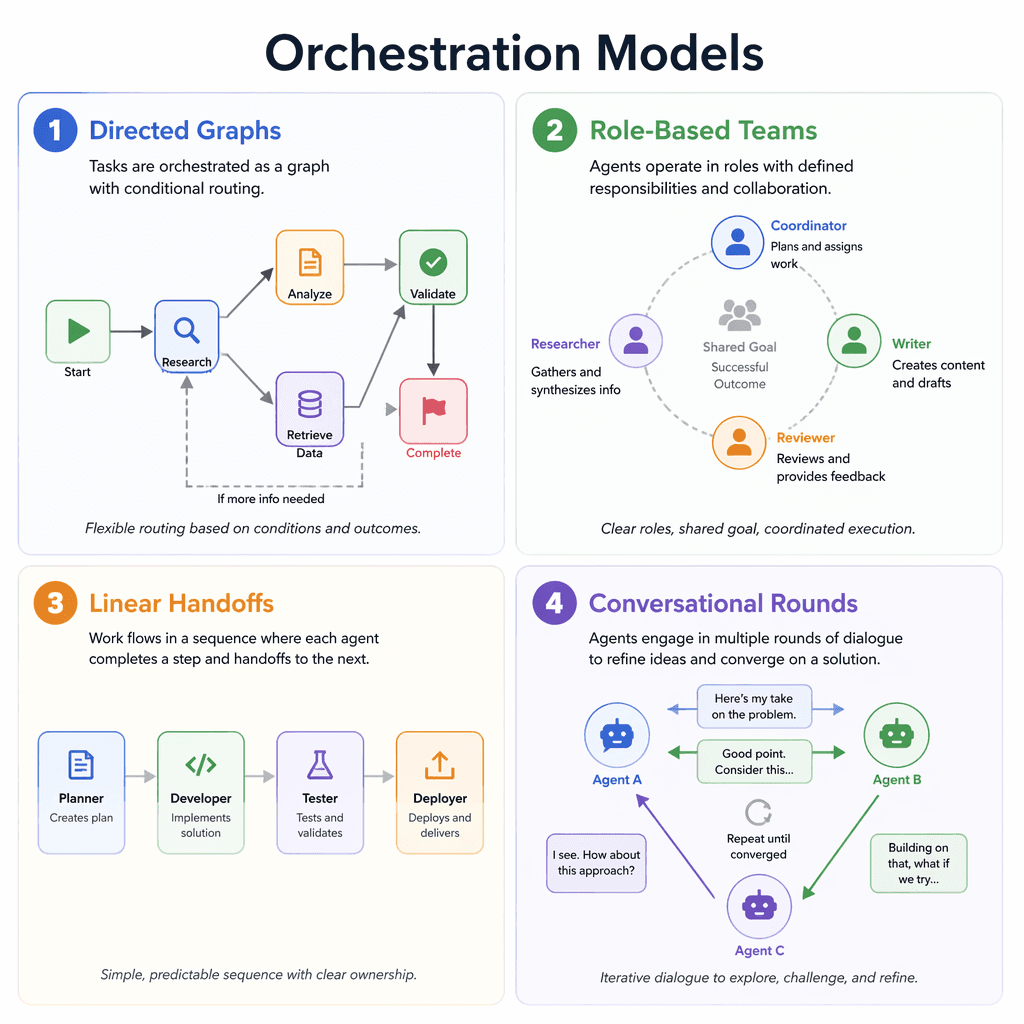

Framework choice is a high-stakes decision. Switching frameworks mid-project can be costly due to deep coupling with agent logic, tool definitions, and memory architectures. Engineers must evaluate frameworks based on three critical factors: orchestration model, ecosystem lock-in, and production readiness. For example, LangGraph’s directed graph model is powerful but complex, while CrewAI’s role-based design is intuitive but less flexible. Similarly, OpenAI Agents SDK and Anthropic Agent SDK tie users to specific LLM providers, whereas LangGraph and CrewAI offer model-agnostic flexibility.
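As an illustration of what a directed graph orchestration model means in practice, the sketch below runs a tiny graph where each node transforms shared state and a router function picks the next node. This is an assumption-laden toy, not LangGraph's actual API: `Graph`, `add_node`, and `END` are hypothetical names chosen for the example.

```python
from typing import Any, Callable

State = dict[str, Any]
Node = Callable[[State], State]
Router = Callable[[State], str]

END = "END"


class Graph:
    """A tiny directed graph: nodes transform state, routers choose the next node."""

    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        self.routers: dict[str, Router] = {}

    def add_node(self, name: str, fn: Node, router: Router) -> None:
        self.nodes[name] = fn
        self.routers[name] = router

    def run(self, start: str, state: State, max_steps: int = 20) -> State:
        current, steps = start, 0
        while current != END:
            if steps >= max_steps:
                raise RuntimeError("graph did not reach END within max_steps")
            state = self.nodes[current](state)
            current = self.routers[current](state)
            steps += 1
        return state


def draft(state: State) -> State:
    return {**state, "attempts": state["attempts"] + 1}


def review(state: State) -> State:
    # Hypothetical rule: the second draft is always good enough.
    return {**state, "approved": state["attempts"] >= 2}


graph = Graph()
graph.add_node("draft", draft, lambda s: "review")
graph.add_node("review", review, lambda s: END if s["approved"] else "draft")
result = graph.run("draft", {"attempts": 0, "approved": False})
print(result)
```

The cycle from `review` back to `draft` is the kind of branching that a graph model expresses directly. A role-based design hides that routing behind agent roles, which is easier to read but leaves less room to customize.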

Core Features to Evaluate

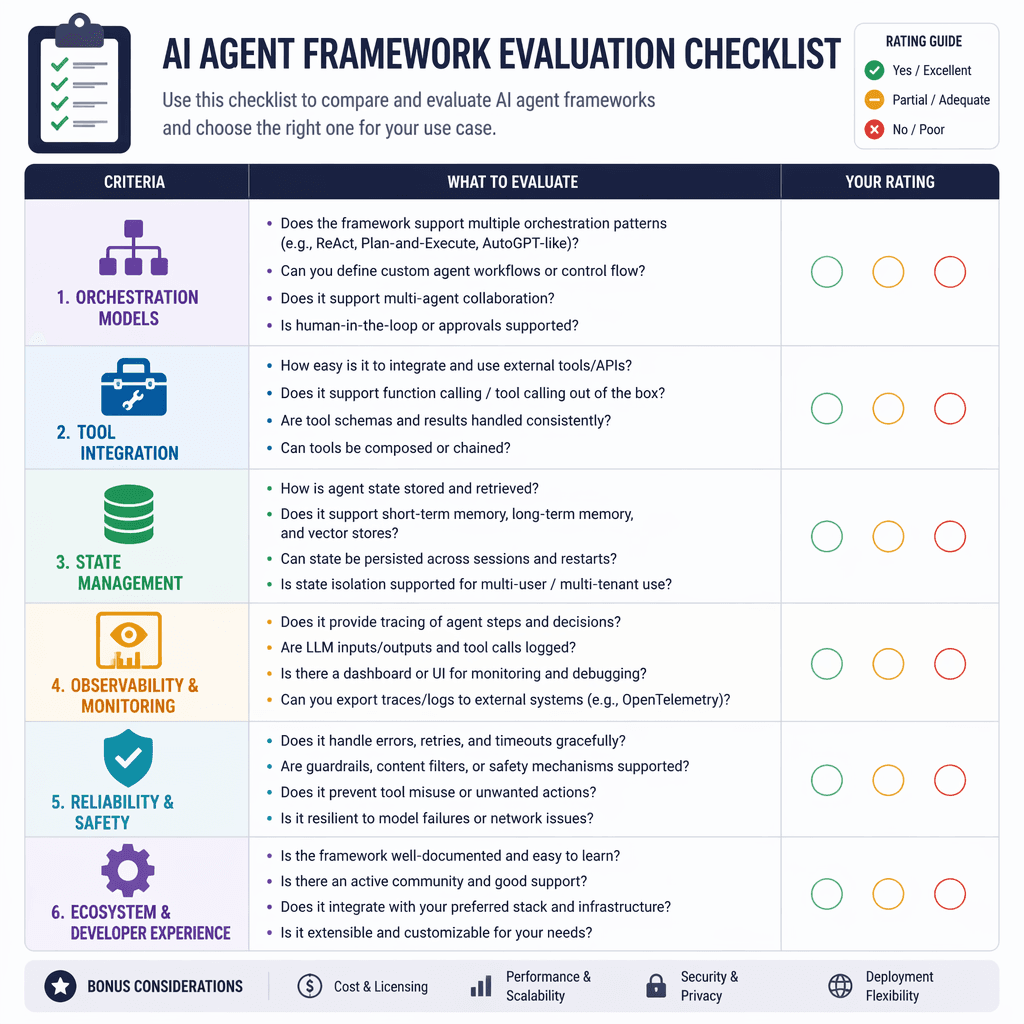

When choosing a framework, prioritize features that align with your use case. Essential capabilities include orchestration models, tool integration, state management, and observability. Multi-agent support and model agnosticism are increasingly important for complex workflows. For example, LangGraph excels in state persistence and error recovery, while CrewAI simplifies multi-agent coordination through role-based design. Observability tools like tracing and logging are critical for debugging and optimizing agent behavior.
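Tracing is easier to evaluate with a picture of what a trace contains. The following sketch wraps a tool function so every call records its inputs, outcome, and duration. The `traced` decorator, the `TRACE` list, and the `lookup_order` tool are all hypothetical; real frameworks typically ship their own tracing hooks with richer metadata.

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict[str, Any]] = []  # in-memory event log, for illustration only


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record one event per tool call, whether it succeeds or fails."""

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        event: dict[str, Any] = {"tool": fn.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            event["result"] = fn(*args, **kwargs)
            event["ok"] = True
            return event["result"]
        except Exception as exc:
            event["ok"] = False
            event["error"] = repr(exc)
            raise  # tracing must not swallow the failure
        finally:
            event["seconds"] = time.perf_counter() - start
            TRACE.append(event)

    return wrapper


@traced
def lookup_order(order_id: str) -> str:
    """Hypothetical tool used only for this example."""
    if not order_id:
        raise ValueError("order_id is required")
    return f"order {order_id}: shipped"


lookup_order("A17")
try:
    lookup_order("")
except ValueError:
    pass

for event in TRACE:
    print(event["tool"], event["ok"], event.get("error"))
```

When comparing frameworks, a useful check is whether their built-in traces capture at least this much per tool call without custom wrappers.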

Key Takeaways

- Framework choice impacts scalability and long-term viability.

- Evaluate orchestration models based on workflow complexity.

- Model agnosticism provides flexibility in LLM provider selection.

- Observability tools are essential for debugging and optimization.

- Production readiness should align with deployment goals.

"The wrong framework can lock you into design constraints or vendor ecosystems, limiting your agent's potential."

Builder note

Before committing to a framework, prototype your agent's core workflows to ensure compatibility with the orchestration model and tool integration capabilities.

Source Card

Best AI Agent Frameworks in 2026 | Expert Guide

This guide provides a comprehensive comparison of the top frameworks used in production workflows, highlighting their strengths, weaknesses, and ideal use cases.

Toolradar

| Signal | Why it matters |
|---|---|
| Orchestration model | Determines how agents make decisions and handle workflows. |
| Tool integration | Enables seamless interaction with external APIs and services. |
| State management | Ensures reliability in long-running workflows. |
| Model agnosticism | Provides flexibility to switch LLM providers. |
| Observability | Facilitates debugging and performance optimization. |

Selection checklist:

- Define your agent's primary workflows and complexity.

- Evaluate orchestration models for compatibility.

- Check for native tool integration and MCP support.

- Assess state management capabilities for reliability.

- Ensure observability tools meet debugging needs.

- Consider model agnosticism for vendor flexibility.

- Verify production readiness for deployment.

Framework quick picks:

- LangGraph: Best for complex, stateful workflows.

- CrewAI: Ideal for role-based multi-agent systems.

- OpenAI Agents SDK: Fastest route for OpenAI users.

- Anthropic Agent SDK: Tight Claude integration.

- AutoGen: Great for research and prototyping.

- Mastra: TypeScript-native with modern features.

- Semantic Kernel: Enterprise-grade for Azure users.

Framework Comparison and Tradeoffs

LangGraph offers unparalleled flexibility with its directed graph model, making it ideal for complex workflows requiring branching logic and error recovery. However, its steep learning curve and additional costs for LangSmith services may deter smaller teams. CrewAI simplifies multi-agent workflows with role-based design but is limited to Python and requires a paid cloud tier for production deployment. OpenAI Agents SDK is perfect for teams committed to OpenAI models, offering minimal abstraction and fast setup, but its linear handoff model lacks flexibility. Anthropic Agent SDK excels in MCP integration and reasoning transparency but is locked to Claude models. AutoGen is a strong choice for research but lacks production-grade observability. Mastra stands out for TypeScript developers, offering modern features like RAG pipelines, but its youth means fewer community resources. Semantic Kernel is enterprise-ready but heavily Azure-centric, making it less appealing for teams outside the Microsoft ecosystem.

Mistakes to Avoid

Avoid choosing a framework that mismatches your use case. For instance, LangGraph is overkill for simple chatbots, while AutoGen’s maintenance mode makes it unsuitable for long-term projects. Similarly, frameworks tied to specific LLM providers can limit your ability to adapt to changing model costs or capabilities. Always prototype workflows to validate compatibility before committing to a framework.
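One way to limit the provider lock-in described above, even inside a vendor-specific framework, is to keep agent logic behind a small interface of your own. The sketch below assumes a hypothetical `ChatModel` protocol with a single `complete` method. The two adapter classes are toy stand-ins; real adapters would call a vendor SDK inside `complete`.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the agent logic is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Toy adapter; a real one would call a provider SDK here."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    """Second toy adapter, to show that swapping providers needs no other changes."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize(model: ChatModel, text: str) -> str:
    """Agent logic written against the interface, not a vendor."""
    return model.complete(f"Summarize: {text}")


print(summarize(EchoModel(), "hi"))
print(summarize(UpperModel(), "hi"))
```

This does not remove coupling to a framework's orchestration or memory model, but it keeps the model call itself replaceable when costs or capabilities change.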

- https://toolradar.com/guides/best-ai-agent-frameworks

Builder implications

For teams evaluating agent frameworks in 2026, the useful question is not whether a release sounds important. The useful question is whether it changes how an agent system is built, tested, operated, or bought. The Toolradar guide (Best AI Agent Frameworks in 2026 | Expert Guide) gives builders a concrete comparison to inspect. That comparison should be mapped against the parts of an agent stack that usually become fragile first, including tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify the workflow where orchestration, tool integration, or state management already creates measurable pain, such as slow triage, brittle handoffs, unclear ownership, or poor observability.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.
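The "keep rollback boring" item in the checklist above can be prototyped before any framework is chosen. The sketch below is one possible shape, assuming a hypothetical JSON checkpoint file and step names. It pauses before a named step, persists state, and resumes later without repeating completed work.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


def save_checkpoint(path: Path, state: dict) -> None:
    """Write to a temp file, then rename, so a crash never leaves a half-written file."""
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps(state))
    tmp.replace(path)


def run_steps(path: Path, steps: list[str], pause_before: Optional[str] = None) -> dict:
    """Resume from the checkpoint if present; stop before `pause_before` for a human."""
    if path.exists():
        state = json.loads(path.read_text())
    else:
        state = {"completed": [], "paused": False}
    state["paused"] = False
    for step in steps:
        if step in state["completed"]:
            continue  # already done in an earlier run
        if step == pause_before:
            state["paused"] = True
            save_checkpoint(path, state)
            return state  # control returns to the operator with state intact
        state["completed"].append(step)  # a real agent would execute the step here
        save_checkpoint(path, state)
    return state


with tempfile.TemporaryDirectory() as tmpdir:
    checkpoint = Path(tmpdir) / "run.json"
    steps = ["fetch", "draft", "send"]
    first = run_steps(checkpoint, steps, pause_before="send")  # operator inspects here
    second = run_steps(checkpoint, steps)  # operator approves, run resumes

print(first)
print(second)
```

If a candidate framework cannot express this pause-and-resume pattern with its own persistence layer, that is worth knowing before the prototype grows.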

| Area | Question | Practical test |
|---|---|---|
| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |
| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |
| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |
| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |
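The Cost row in the table above depends on a metric that is easy to get wrong: cost per successful task divides total spend, including failed runs, by the number of successes. A minimal sketch of that arithmetic follows, using a hypothetical `Run` record and made-up sample numbers.

```python
from dataclasses import dataclass


@dataclass
class Run:
    cost_usd: float
    succeeded: bool
    needed_human_fix: bool


def summarize_runs(runs: list[Run]) -> dict[str, float]:
    """Compute baseline metrics; failed runs still count toward total cost."""
    if not runs:
        raise ValueError("need at least one run")
    successes = sum(r.succeeded for r in runs)
    total_cost = sum(r.cost_usd for r in runs)
    return {
        "success_rate": successes / len(runs),
        "human_fix_rate": sum(r.needed_human_fix for r in runs) / len(runs),
        "cost_per_successful_task": total_cost / successes if successes else float("inf"),
    }


# Made-up sample data: four runs, one failure, one needing human correction.
runs = [
    Run(0.25, True, False),
    Run(0.25, True, True),
    Run(0.50, False, False),
    Run(0.50, True, False),
]
report = summarize_runs(runs)
print(report)
# {'success_rate': 0.75, 'human_fix_rate': 0.25, 'cost_per_successful_task': 0.5}
```

Here the average cost per run is 0.375 but the cost per successful task is 0.50, because the failed run is paid for by the three that worked. Capturing the same numbers before a framework change gives a baseline to compare against.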

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports around this source. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.